At its core, Edge AI combines edge computing and edge intelligence to run machine learning tasks directly on end devices, which generally consist of a built-in microprocessor and sensors. Data is processed locally and stored at the edge node. Running machine learning models at the edge reduces latency and lowers network bandwidth consumption. Edge AI supports applications that rely on real-time data processing by handling data, learning models, and inference on the device. According to Valuates Reports, the edge AI hardware market, valued at USD 6.88 billion, is expected to reach USD 39 billion by 2030 at a CAGR of 18.8%.

The advancement of IoT and the adoption of smart technologies in consumer electronics, automotive, and other sectors are driving the AI hardware market forward. Edge AI processors with on-device analytics are expected to expand the opportunities for this market. NVIDIA, Google, AMD, Lattice, Xilinx, and Intel are some of the edge computing platform providers for designing such cognitive AI applications. Furthermore, advances in deep learning, AI hardware accelerators, neural networks, computer vision, optical character recognition, and natural language processing open up new opportunities. As businesses move rapidly towards decentralized computing architectures, they are also discovering new ways to use this technology to increase productivity.

What is Edge Computing?

Edge computing brings the computing and storage of data closer to the devices that collect it, rather than relying on a primary site that might be far away. This helps avoid the latency and redundancy issues that limit an application’s efficiency. Combining machine learning with edge computing gives rise to new, resilient, and scalable AI systems in a wide range of industries.

Myth: Will Edge Computing suppress Cloud Computing?

No. Edge computing is not going to replace or suppress cloud computing. Instead, the edge will complement the cloud environment, improving performance and allowing machine learning tasks to be leveraged to a greater extent.

Need for Edge AI Hardware Accelerators

Running complex machine learning tasks on edge devices requires specialized AI hardware accelerators, which boost speed and performance and offer greater scalability, security, reliability, and efficient data management.

VPU (Vision Processing Unit)

A vision processing unit is a type of microprocessor designed to accelerate machine learning and AI algorithms. It handles Edge AI workloads efficiently and supports tasks such as image processing, much like a video processing unit paired with neural networks. It combines low power consumption with high performance.

GPU (Graphics Processing Unit)

A GPU is an electronic circuit capable of producing graphics for display on an electronic device. It can process many pieces of data simultaneously, which makes it well suited to machine learning, video editing, and gaming applications. Because GPUs can perform complex machine learning tasks, they are now used extensively in mobile phones, tablets, workstations, and gaming consoles.

TPU (Tensor Processing Unit)

Google introduced the Tensor Processing Unit (TPU), an ASIC for executing machine learning (ML) algorithms based on neural networks. It is designed to use less energy and run these workloads more efficiently than general-purpose processors. Google Cloud Platform with TPUs is a good choice for ML applications that don’t require a lot of cloud infrastructure.

Applications of Edge AI across industries

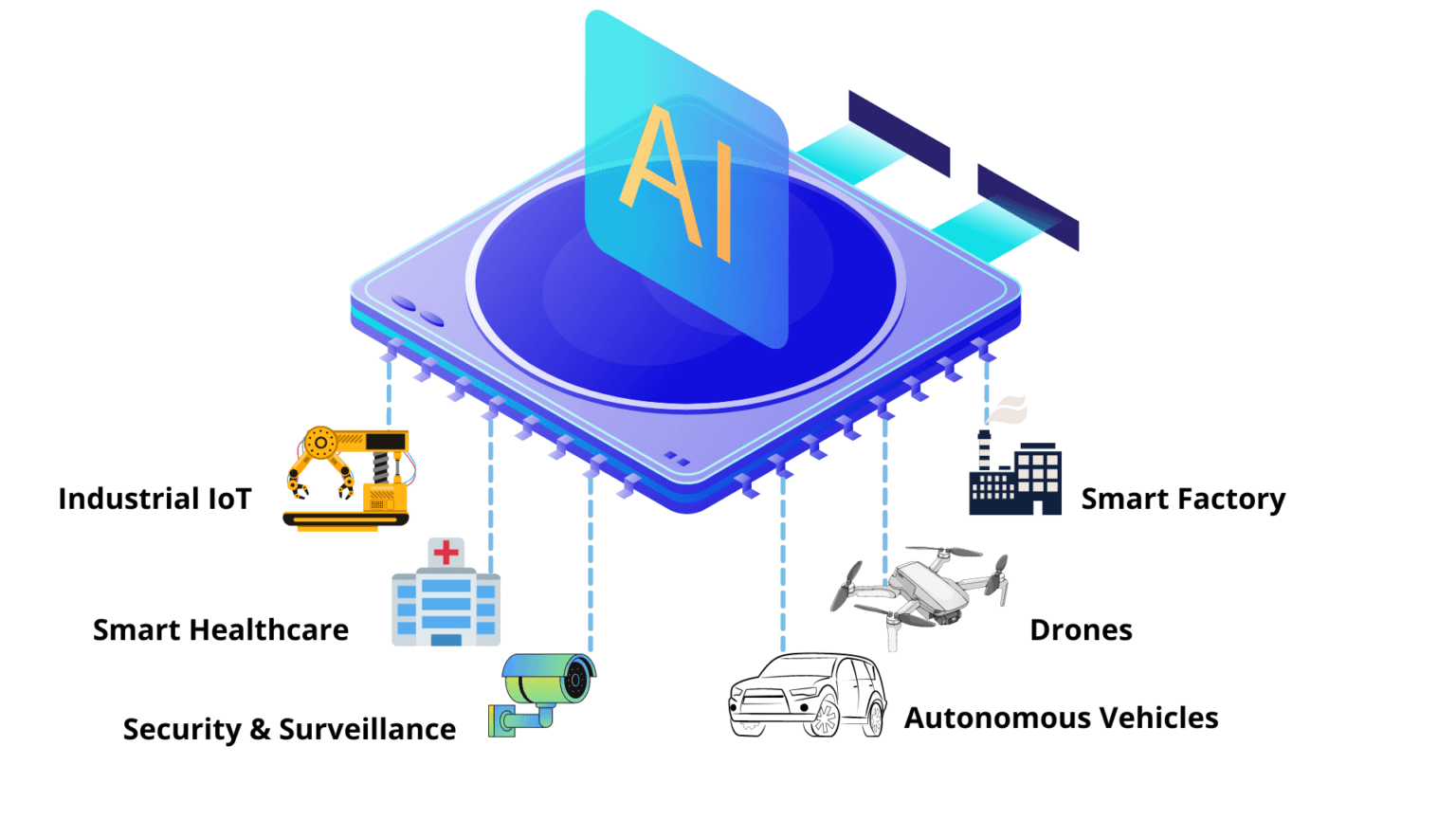

Smart Factories

Edge AI can be applied to predictive maintenance in the equipment industry: edge devices analyze stored data to identify conditions that indicate a likely failure before the failure actually happens.
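
To make the idea of on-device failure prediction concrete, here is a minimal sketch that flags sensor readings deviating sharply from recent history. The class name `VibrationMonitor`, the window size, the threshold, and the sample readings are all hypothetical and chosen only for illustration. A production system would more likely use a trained model, but the principle of analyzing locally stored data on the device is the same.

```python
from collections import deque
from statistics import mean, stdev


class VibrationMonitor:
    """Flags readings that deviate strongly from the recent local history."""

    def __init__(self, window=20, threshold=3.0):
        self.history = deque(maxlen=window)  # recent readings kept on the device
        self.threshold = threshold           # z-score above which an alert is raised

    def update(self, reading):
        """Return True if the reading looks anomalous, then record it."""
        anomalous = False
        if len(self.history) >= 5:  # wait for a few samples before judging
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma > 0 and abs(reading - mu) / sigma > self.threshold:
                anomalous = True
        self.history.append(reading)
        return anomalous


# Hypothetical vibration amplitudes; the last value simulates a developing fault.
monitor = VibrationMonitor()
readings = [1.00, 1.02, 0.98, 1.01, 0.99, 1.03, 0.97, 1.00, 2.50]
alerts = [i for i, r in enumerate(readings) if monitor.update(r)]
print("anomalous sample indices:", alerts)
```

Because the check uses only a small rolling window, it fits within the memory and compute limits of a typical edge device and needs no connection to the cloud.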

Autonomous Vehicles

Self-driving vehicles are one of the best examples of edge AI in the automobile industry, where it helps detect and identify objects and thereby reduces the chance of accidents. It aids in avoiding collisions with pedestrians and other vehicles and in detecting roadblocks, all of which require immediate, real-time data processing because lives are at stake.

Industrial IoT

By bringing computer vision to Industrial IoT, visual inspections can be carried out with little human intervention, increasing operational efficiency and improving productivity on assembly lines.

Smart Healthcare

Edge AI can help the healthcare industry through wearables that improve monitoring of a patient’s health and help forecast disorders early. This data can also be used to provide patients with effective treatment in real time. Patient data can be secured with HIPAA compliance in place.

Benefits of using Machine Learning on Edge

Higher Scalability

As the number of interconnected IoT devices rises across industries, Edge AI is becoming a preferred choice because it processes data efficiently and promptly without relying heavily on a centralized cloud network.

Data Protection & Security

Since edge devices are not completely dependent on cloud resources, attackers cannot bring the whole system to a standstill by taking down a single cloud data center or server.

Low Operational Risks

Since Edge AI is based on a distributed model, a failure does not affect the entire system chain, as it can in the cloud, which is based on a centralized model. The failure of an individual edge device does not pose a major threat to the system as a whole.

Reduced Latency

With Edge AI, computation can be performed in milliseconds. This is possible because data does not need to be sent to the cloud for initial processing, which saves time and reduces latency in data processing.

Cost-effectiveness

Edge AI saves considerable bandwidth because data transfer is minimized. This also reduces the capacity required from cloud services, which makes Edge AI a cost-effective option compared with cloud-based ML solutions.
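
The scale of the bandwidth saving can be estimated with simple arithmetic. The sketch below compares a camera that uploads every frame to the cloud with one that runs inference locally and uploads only detection events. The frame rate, frame size, event count, and event size are assumed figures for illustration, not measurements.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def raw_stream_bytes_per_day(fps, bytes_per_frame):
    """Bytes uploaded per day when every frame is sent to the cloud."""
    return fps * SECONDS_PER_DAY * bytes_per_frame


def event_bytes_per_day(events_per_day, bytes_per_event):
    """Bytes uploaded per day when only inference results leave the device."""
    return events_per_day * bytes_per_event


# Assumed workload: 10 frames/s at 100 kB each, versus 500 small events per day.
raw = raw_stream_bytes_per_day(fps=10, bytes_per_frame=100_000)
events = event_bytes_per_day(events_per_day=500, bytes_per_event=200)

print(f"cloud-only upload:     {raw / 1e9:.1f} GB/day")
print(f"edge inference upload: {events / 1e3:.1f} kB/day")
```

Under these assumptions the edge device uploads several orders of magnitude less data. Actual savings depend on how often events occur and how much context is sent with each one.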

In many cases, machine learning models are complex and large, which makes it extremely difficult to move them onto compact edge devices. If the complexity of the algorithms is reduced without proper precautions, accuracy will suffer, and the available computing power will still be limited. Hence, it is crucial to evaluate all the failure points at the initial development stage. The highest priority should be given to thoroughly testing the trained model on different types of devices and operating systems.
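
The trade-off between model size and accuracy can be seen in a minimal sketch of symmetric 8-bit quantization, one common way to shrink a model for the edge. The article does not name a specific technique, so this choice, the function names, and the sample weights are assumptions made for illustration. Storing each weight as one byte instead of a 4-byte float cuts size to roughly a quarter, at the cost of a small rounding error.

```python
def quantize_int8(weights):
    """Map floats to signed 8-bit integers using a single symmetric scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range used here.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from the quantized integers."""
    return [v * scale for v in q]


# Hypothetical weights from one layer of a trained model.
weights = [0.823, -1.27, 0.051, 0.404, -0.637]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The worst-case rounding error is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print("quantized:", q)
print(f"max error: {max_error:.4f} (step size {scale:.4f})")
```

Measuring this error, and the resulting change in model accuracy, on each target device is one practical way to carry out the evaluation recommended above.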

At MosChip, we provide machine learning services and solutions with expertise in edge platforms (TPU, RPi), NN compilers for the edge, and tools such as TensorFlow, TensorFlow Lite, Docker, Git, AWS DeepLens, JetPack SDK, and many more, targeted at domains such as automotive, multimedia, Industrial IoT, healthcare, consumer, and security and surveillance. MosChip can help businesses build high-performance edge ML solutions such as object/lane detection, face/gesture recognition, human counting, key-phrase/voice command detection, and more across various platforms. Our team of experts has years of experience working on various edge platforms, cloud ML platforms, and ML tools and technologies.

About MosChip:

MosChip has 20+ years of experience in Semiconductor, Embedded Systems & Software Design, and Product Engineering services, with a team of 1300+ engineers.

Established in 1999, MosChip has development centers in Hyderabad, Bangalore, Pune, and Ahmedabad (India) and a branch office in Santa Clara, USA. Our embedded expertise involves platform enablement (FPGA/ASIC/SoC/processors), firmware and driver development, BSP and board bring-up, OS porting, middleware integration, product re-engineering and sustenance, device and embedded testing, test automation, IoT, AI/ML solution design, and more. Our semiconductor offerings involve silicon design, verification, validation, and turnkey ASIC services. We are also a TSMC DCA (Design Center Alliance) Partner.

Stay current with the latest MosChip updates via LinkedIn, Twitter, Facebook, Instagram, and YouTube.