Machine learning (ML) models have become increasingly popular across many industries because of their ability to make accurate, data-driven predictions. However, developing an ML model is not a one-time process: it requires continuous improvement to keep predictions reliable and accurate. This is where ML testing plays a critical role, all the more so as the global artificial intelligence and machine learning (AI/ML) market grows rapidly. According to Fortune Business Insights, the worldwide AI/ML market was valued at approximately $19.20 billion in 2022 and is expected to expand from $26.03 billion in 2023 to an estimated $225.91 billion by 2030, a compound annual growth rate (CAGR) of 36.2%. In this article, we explore the importance of ML testing, the benefits it provides, the types of tests that can be conducted, and the tools and frameworks available to streamline the testing process.

What is Machine Learning (ML) testing, and why is it important?

Machine learning (ML) testing is the process of evaluating the performance of ML models to establish their accuracy and reliability. ML models are algorithms designed to make independent decisions based on patterns in data. Testing them is essential to ensure that they function as intended and produce dependable results when deployed in real-world applications. It involves several kinds of assessment that verify the quality and effectiveness of a model and aim to identify and mitigate issues, errors, or biases, so that the model meets its intended objectives.

Machine learning systems operate in a data-driven programming domain where their behaviour depends on the data used for training and testing. This unique characteristic underscores the importance of ML testing. ML models are expected to make independent decisions, and for these decisions to be valid, rigorous testing is essential. Good ML testing strategies aim to reveal any potential issues related to design, model selection, and programming to ensure reliable functioning.

How to Test ML Models?

Testing is a critical step in developing and deploying robust, dependable machine learning (ML) models. To understand the process of ML testing, let’s break down the key components of both offline and online testing.

Offline Testing

Offline testing is an essential phase that takes place while an ML model is being developed and trained. It ensures that the model performs as expected before it is deployed into a real-world environment. Here’s a step-by-step breakdown of the offline testing process.

The process of testing machine learning models involves several critical stages. It commences with requirement gathering, where the scope and objectives of the testing procedure are defined, ensuring a clear understanding of the ML system’s specific needs. Test data preparation follows, where test inputs are prepared. These inputs can either be samples extracted from the original training dataset or synthetic data generated to simulate real-world scenarios.

ML systems are designed to answer questions that have no pre-existing answers, so the expected output for a given test input is often not known in advance. Test oracles are the methods used to decide whether a deviation in the ML system’s behaviour is problematic. Common techniques such as model evaluation and cross-referencing are employed in this step to compare model predictions with expected outcomes. Test execution then takes place on a subset of the data, with close attention to test oracle violations. Any issues identified are reported and resolved, and the fixes are typically validated with regression tests. Once a testing cycle completes without uncovering a bug, the offline testing process ends and the ML model is ready for deployment.
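The oracle and regression steps described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the names `accuracy_oracle`, `regression_check`, and `toy_model`, the 0.9 accuracy threshold, and the sample data are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class OracleResult:
    accuracy: float
    passed: bool
    violations: list  # indices where the prediction differs from the expected label


def accuracy_oracle(predictions, expected, min_accuracy=0.9):
    """Compare predictions with expected outcomes and apply a pass/fail threshold."""
    if len(predictions) != len(expected):
        raise ValueError("predictions and expected must have the same length")
    if not expected:
        raise ValueError("test set must not be empty")
    violations = [i for i, (p, e) in enumerate(zip(predictions, expected)) if p != e]
    accuracy = (len(expected) - len(violations)) / len(expected)
    return OracleResult(accuracy, accuracy >= min_accuracy, violations)


def regression_check(new_accuracy, baseline_accuracy, tolerance=0.01):
    """Pass if the new model is not worse than the baseline by more than tolerance."""
    return new_accuracy >= baseline_accuracy - tolerance


# Hypothetical stand-in for a trained model: predicts 1 for inputs above 0.5.
def toy_model(x):
    return 1 if x > 0.5 else 0


test_inputs = [0.1, 0.4, 0.6, 0.9, 0.55, 0.2, 0.8, 0.45, 0.7, 0.3]
expected = [0, 0, 1, 1, 0, 0, 1, 0, 1, 0]

result = accuracy_oracle([toy_model(x) for x in test_inputs], expected)
print(result.accuracy, result.passed, result.violations)
print(regression_check(result.accuracy, baseline_accuracy=0.9))
```

The `violations` list is what would be reported for resolution, and `regression_check` is the kind of guard that can be re-run after each fix to confirm that the model has not slipped below a previously accepted baseline.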

Online Testing

Online testing takes place once the ML system is deployed and exposed to new data and user behaviour in real time. It aims to ensure that the model continues to perform accurately and effectively in a dynamic environment. Here are the key components of online testing.

- Runtime monitoring

- User response monitoring

- A/B testing

- Multi-Armed Bandit

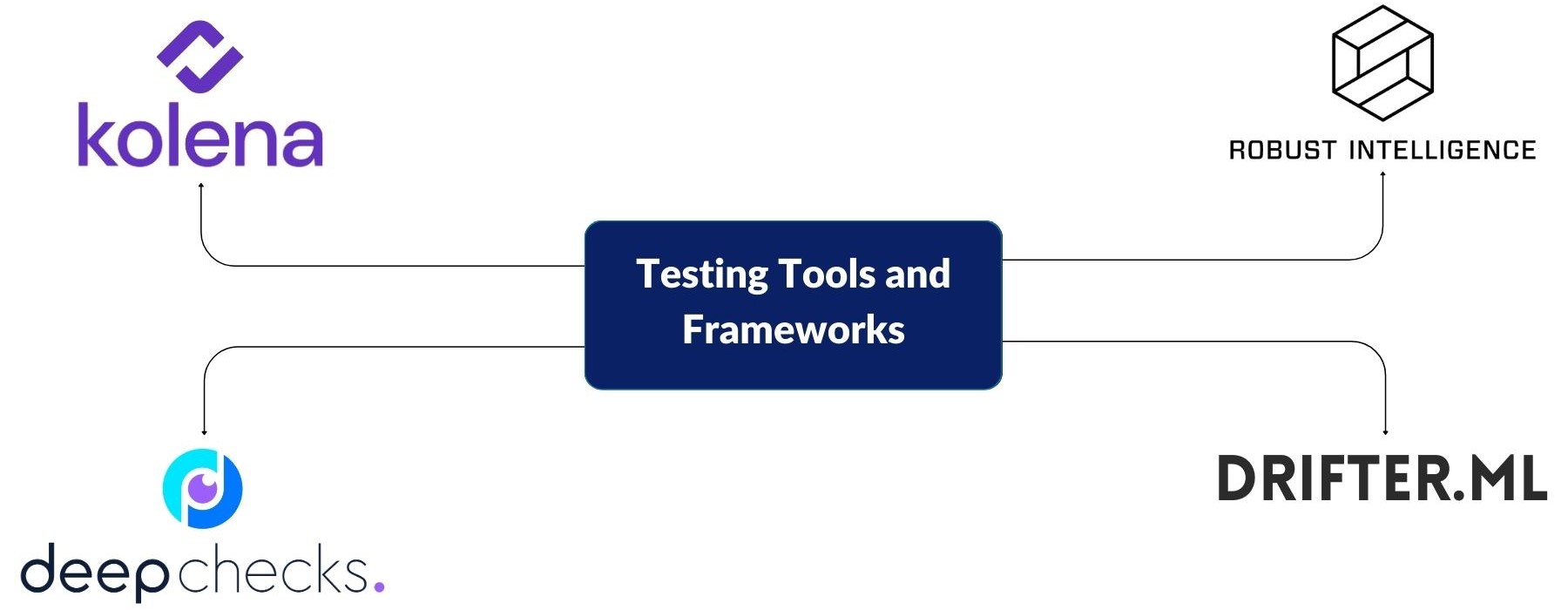
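As an illustration of the A/B testing component, the sketch below compares the success rates of two deployed model variants with a two-proportion z-test. It is one common way to judge an A/B result, not the only one; the function name `two_proportion_z_test` and the traffic figures are assumptions made for the example.

```python
import math


def two_proportion_z_test(successes_a, total_a, successes_b, total_b):
    """Return (z, two-sided p-value) for the difference in success rates B - A."""
    if total_a <= 0 or total_b <= 0:
        raise ValueError("each variant needs at least one observation")
    rate_a = successes_a / total_a
    rate_b = successes_b / total_b
    # Pooled rate under the null hypothesis that both variants perform equally.
    pooled = (successes_a + successes_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        return 0.0, 1.0  # all outcomes identical, no evidence of a difference
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical traffic split: model A is the current model, model B the candidate.
z, p_value = two_proportion_z_test(120, 1000, 160, 1000)
print(f"z = {z:.3f}, p = {p_value:.4f}")
```

A small p-value suggests that the difference between the variants is unlikely to be due to chance alone. A multi-armed bandit approach differs in that it shifts traffic towards the better-performing variant while the experiment is still running, rather than waiting for a fixed sample.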

Testing tools and frameworks

Several tools and frameworks are available to simplify and automate ML model testing. These tools provide a range of functionalities to support different aspects of testing.

Deepchecks

Deepchecks is an open-source library designed to evaluate and validate machine learning models, including deep learning models, and the data they use. It offers tools for debugging and for monitoring data quality, supporting robust and reliable ML solutions.

Drifter-ML

Drifter-ML is an ML model testing tool written specifically for the scikit-learn library. It focuses on detecting and managing data drift in machine learning models, letting you monitor and address shifts in data distribution over time, which is essential for maintaining model performance.
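To make the idea of data drift concrete, here is a minimal, library-independent sketch that scores the shift in a single numeric feature with the Population Stability Index (PSI). It does not use or reproduce Drifter-ML's API; the function name, the equal-width binning, and the sample data are assumptions made for illustration.

```python
import math


def population_stability_index(reference, current, bins=10):
    """PSI between two samples of one numeric feature, binned on the reference range."""
    lo, hi = min(reference), max(reference)
    if lo == hi:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / bins

    def bin_fractions(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the reference range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_frac = bin_fractions(reference)
    cur_frac = bin_fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_frac, cur_frac))


# Hypothetical feature values: training-time data versus two production samples.
reference = [i / 100 for i in range(100)]
unchanged = list(reference)
shifted = [0.5 + i / 200 for i in range(100)]

psi_unchanged = population_stability_index(reference, unchanged)
psi_shifted = population_stability_index(reference, shifted)
print(psi_unchanged, psi_shifted)
```

A PSI of zero means the two distributions fall into the bins identically, and larger values indicate a larger shift. A commonly cited rule of thumb treats values above roughly 0.2 as notable drift, although the right threshold depends on the application.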

Kolena.io

Kolena.io is a Python-based framework for ML testing. It focuses on data validation that ensures the integrity and consistency of data. It lets you set and enforce data quality expectations, ensuring reliable input for machine learning models.
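The general idea of setting and enforcing data quality expectations can be sketched without any particular framework. The example below is not Kolena's API; `check_expectations`, the column names, and the rules are hypothetical and serve only to show the pattern.

```python
def check_expectations(rows, expectations):
    """Return (row_index, column, message) for every violated expectation."""
    failures = []
    for i, row in enumerate(rows):
        for column, (predicate, message) in expectations.items():
            value = row.get(column)
            # A missing value counts as a violation of the column's expectation.
            if value is None or not predicate(value):
                failures.append((i, column, message))
    return failures


# Hypothetical schema: column name -> (predicate, description of the rule).
expectations = {
    "age": (lambda v: isinstance(v, int) and 0 <= v <= 120, "age must be an int in [0, 120]"),
    "country": (lambda v: v in {"IN", "US", "DE"}, "country must be a known code"),
}

rows = [
    {"age": 34, "country": "IN"},
    {"age": -3, "country": "US"},
    {"age": 51},
]

failures = check_expectations(rows, expectations)
for row_index, column, message in failures:
    print(f"row {row_index}, column {column}: {message}")
```

Running such checks before training or inference keeps malformed records from silently reaching the model.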

Robust Intelligence

Robust Intelligence is a suite of tools and libraries for model validation and auditing in machine learning. It provides capabilities to assess bias and ensure model reliability, contributing to the development of ethical and robust AI solutions.
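One simple way to quantify the kind of bias such audits look for is the demographic parity difference: the gap in positive-prediction rate between groups. The sketch below is a generic illustration and does not represent Robust Intelligence's tooling; the function name and the sample predictions are assumptions.

```python
def demographic_parity_difference(predictions, groups, positive=1):
    """Return (largest gap in positive-prediction rate between groups, per-group rates)."""
    totals, positives = {}, {}
    for pred, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (pred == positive)
    rates = {g: positives[g] / totals[g] for g in totals}
    return max(rates.values()) - min(rates.values()), rates


# Hypothetical model outputs for two groups of four people each.
predictions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

gap, rates = demographic_parity_difference(predictions, groups)
print(gap, rates)
```

A gap close to zero means the model produces positive predictions at similar rates across groups. Whether a given gap is acceptable is a judgment that depends on the use case.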

ML model testing is a crucial step in the development process to ensure the reliability, accuracy, and fairness of predictions. By conducting various types of tests, developers can optimize ML models, detect and prevent errors and biases, and improve robustness and generalization, that is, the models’ ability to perform well on new, unseen data beyond their training set. With the availability of testing tools and frameworks, the testing process can be streamlined and automated, improving efficiency and effectiveness. Implementing robust testing practices is essential for the successful deployment and operation of ML models, contributing to better decision-making and improved outcomes in diverse industries.

MosChip Company provides Quality Engineering Services for embedded software, device, product, and end-to-end solution testing. This helps businesses create high-quality solutions that enable them to compete successfully in the market. Our comprehensive QE services include machine learning applications and platforms testing, dataset and feature validation, model validation and performance benchmarking, embedded and product testing, DevOps, test automation, and compliance testing.

About MosChip

MosChip Technologies Limited is a publicly traded semiconductor and system design services company headquartered in Hyderabad, India, with 1000+ engineers located in Silicon Valley (USA), Hyderabad, and Bengaluru. MosChip has a track record of over twenty years in designing semiconductor products and SoCs for computing, networking, and consumer applications. Over the past two decades, MosChip has developed and shipped millions of connectivity ICs. For more information, visit moschip.com.
